A Data scientist’s abc to AI ethics, part 1 – About AI and ethics

In daily life we constantly encounter new types of machine actors: in social media, in the grocery store, when negotiating a loan. Some of them appear amiable and friendly, but almost all are difficult to understand deeply. Their constitution may be cryptic and inaccessible.

It is of course of interest how algorithms and machine actors impact our society. In a larger context, they may not remain value-free or neutral.

Philosophical and other types of interest

The Finnish Philosophical Society’s January 2019 colloquium addressed these kinds of questions. Talks concerned AI, humanity, and society at large. Prominent topics included the existence of machine autonomy and ethics. One interesting track concerned the definitions of moral and legal responsibility. Many weighty concepts, such as humanity, personhood, and the aesthetics of AI, were discussed too.

From a purely philosophical perspective, technology might be viewed as one particular type of otherness: something that lies outside the bounds of direct personal interest.

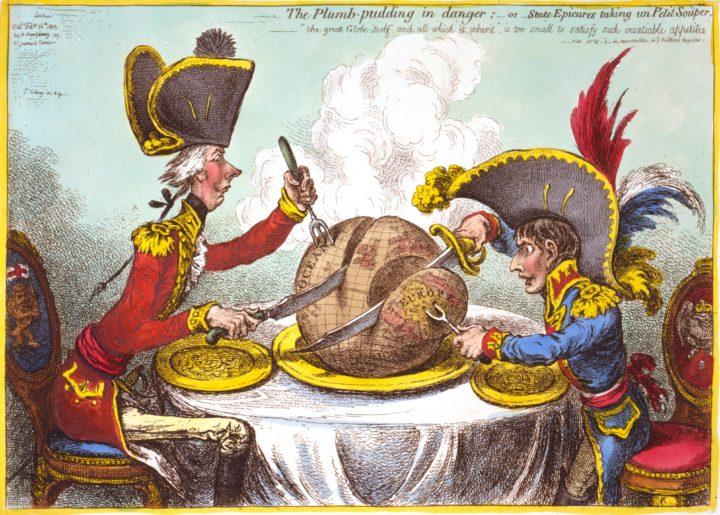

On the other hand, the landscape around AI may appear supercharged at the moment. Even the term AI reveals many competing interests. “Whose agenda does the ethics of AI in each case forward?” Maija-Riitta Ollila asked in her presentation.

No wonder many people with a technical background are a bit wary of the term. Often it would be more appropriate to use a less charged one – some good alternatives include machine learning, statistical analysis, and decision modeling.

Between AI and ethics

Most of the talks in the colloquium shared the sensible view that AI as a term should be subject to critique. One moral responsibility for tech people, then, is simply to shoot down the related hype.

But the landscape of AI and ethics is complex and controversial. As if to back this observation, many presenters in the colloquium openly asked the audience to correct them if they got technical points wrong.

For instance, cognitive and emotional modeling were named as two quite distinct areas of research within cognitive science and neuroscience. Judged by their achievements, the first has seen much more progress than the second: logic is relatively easy to simulate compared with emotional attitudes. We may attribute this to the innate complexity of human action and information processing, a complexity that such simulations merely exemplify.

Furthermore, as illustrated by many intriguing thought experiments, problems arise when we try to attribute an ethical or moral role to a machine actor. Some of these I’ll try to explicate in later posts.

One source of discomfort for me has been the relationship between AI discussion and ethics. Is the talk always morally sound? Sometimes it has felt that ethics does not fit into the world of AI marketing. If I had to define ethics in a few words, I would probably say that it is deep thinking about prevalent problems of good and bad.

Some wisdom about AI

We may juxtapose this with a punchline about contemporary AI. “[The] systems are merely optimization machines, and ultimately, their target is optimization of business profit”, one fellow data scientist wryly commented to me.

So on the surface level, computer science and mathematical problems might not connect to ethics at all. The situation may be similar in sales and marketing. In philosophy, too, formal logic on the one hand and ethics and cultural philosophy on the other are largely separate areas.

What to make of this divide? My next post will examine popular perceptions of AI in the wild.

This is the first of four posts that will address AI and ethics from a somewhat more technical angle.