Pose detection to help seabird research – Baltic Seabird Hackathon

Baltic Seabird Hackathon

Some weeks ago we decided to participate in the Baltic Seabird Hackathon in Gothenburg. The hackathon was organised by AI INNOVATION of Sweden, Baltic Seabird Project, WWF, SLU and Chalmers University of Technology. In practice we spent a few weeks preparing ourselves, going through the massive dataset and creating some models to work with the data. Finally we travelled to Gothenburg and spent 2 days there finalising our models, presenting the results and of course spending time with the other teams and networking with nice people. In this post we dive a bit deeper into the process of creating the prediction model for pose detection and the results we were able to achieve.

Initially we didn’t know that much about seagulls, but during those couple of weeks we got to learn wonderful details about the birds, their living habits and social interaction. I bet you didn’t know that the oldest birds are over 45 years old! During the hackathon days in Gothenburg we had many seabird experts available for discussion and for more challenging questions about the birds. In addition we were given some machine learning and technical experts to support the work in the provided data factory platform. We decided to work in an AWS sandbox environment, because it was a more natural choice for us.

Our team was selected to have cross-functional expertise in design, data, data science and software development and to be able to work in a multi-site setup. During the hackathon we had 3 members working in Gothenburg and 2 members working remotely from Sweden and Finland.

So what did we try and achieve?

Material available

For the hackathon we received some 2000 annotated images and 100+ hours of video from 2 different camera locations on the island of Stora Karlsö. The cameras were installed for the first time in 2019, so all this material was quite new. The videos started at the beginning of May, when the first birds arrive at the same ledge as they do every year, and covered the life of the birds until the beginning of August, when most of them had already left.

The images and videos were in Full HD resolution, i.e. 1920×1080, which gives a really good starting point. The cameras looked down on the ledge from above, and most of the videos and images looked like the example below. The annotated birds were the ones on the top ledge. There were also videos and images from night time, which made prediction a bit harder.

Our idea and approach

Initial ideas from the seabird experts were related to identifying different events in the video clips. They were interested in finding out automatically when an egg was laid, when birds were leaving for and coming back from fishing trips, and when they were doing other activities.

We thought that implementing these requirements would be quite straightforward with the big annotation set and thus decided to take a slightly different approach. Also, because of personal interests, we wanted to investigate what pose detection of the birds could provide to the scientists.

First some groundwork – Object detection

Before being able to detect the poses of the birds, one needs to identify where the birds are and what kind of birds they are. We were provided with over 2000 annotated images containing annotations for adult birds, chicks and eggs. There were far fewer annotated chicks and eggs than adult birds, and therefore we decided to focus on adult birds. With the eggs there was also the issue of the ledge color being similar to the egg color, which made it much harder to separate eggs from the ledge.

We decided to use the ImageAI (https://github.com/OlafenwaMoses/ImageAI) Python library for object detection. It has been built with simplicity in mind, and therefore it was fast and easy to take into use given the existing annotation set. All we had to do was to transform the existing annotations into PascalVOC format. After the initial setup we trained the model with about 200 images, because we didn’t want to spend too much time in the object detection phase. There is a good tutorial available on how to do it with your custom annotation set: https://github.com/OlafenwaMoses/ImageAI/blob/master/imageai/Detection/Custom/CUSTOMDETECTIONTRAINING.md
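To give an idea of what the conversion into PascalVOC format involves, here is a minimal sketch in Python. It is not the script we used in the hackathon: the function name `to_pascal_voc`, the input tuple layout and the label `adult` are assumptions made for illustration, since the format of the original annotations is not described here.

```python
import xml.etree.ElementTree as ET


def to_pascal_voc(filename, width, height, boxes):
    """Build a Pascal VOC annotation (as an XML string) for one image.

    boxes is a list of (label, xmin, ymin, xmax, ymax) tuples in pixels.
    This input layout is an assumption, not the hackathon data format.
    """
    root = ET.Element("annotation")
    ET.SubElement(root, "filename").text = filename

    size = ET.SubElement(root, "size")
    ET.SubElement(size, "width").text = str(width)
    ET.SubElement(size, "height").text = str(height)
    ET.SubElement(size, "depth").text = "3"  # RGB images

    for label, xmin, ymin, xmax, ymax in boxes:
        obj = ET.SubElement(root, "object")
        ET.SubElement(obj, "name").text = label
        ET.SubElement(obj, "difficult").text = "0"
        bndbox = ET.SubElement(obj, "bndbox")
        for tag, value in (("xmin", xmin), ("ymin", ymin),
                           ("xmax", xmax), ("ymax", ymax)):
            ET.SubElement(bndbox, tag).text = str(int(value))

    return ET.tostring(root, encoding="unicode")


# Hypothetical example: one adult bird in a Full HD frame.
xml_text = to_pascal_voc("frame_0001.jpg", 1920, 1080,
                         [("adult", 100, 200, 260, 420)])
print(xml_text)
```

Each image gets one such XML file, with one `object` element per annotated bird.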

Even with very lightweight training we were easily able to get over 95% precision for the detections. This was enough for our original approach of focusing on poses rather than activities. Otherwise we would probably have continued to develop the object detection model further to identify different activities happening on the ledge, as some of the other teams decided to do.

Based on these bounding boxes we were able to create 640×640 clips of each bird. We utilised FFMPEG to crop the video clips.
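As a rough sketch of how such a crop can be derived from a bounding box, the Python below centres a 640×640 window on the box, clamps it to the 1920×1080 frame and builds an ffmpeg command using the `crop=w:h:x:y` video filter. The helper names and file names are hypothetical, and the centring and clamping strategy is an assumption rather than a description of our actual script.

```python
def crop_origin(box, frame_w=1920, frame_h=1080, size=640):
    """Top-left corner of a size x size window centred on the box.

    box is (xmin, ymin, xmax, ymax). The window is clamped so that it
    always stays fully inside the frame.
    """
    xmin, ymin, xmax, ymax = box
    cx = (xmin + xmax) // 2
    cy = (ymin + ymax) // 2
    x = min(max(cx - size // 2, 0), frame_w - size)
    y = min(max(cy - size // 2, 0), frame_h - size)
    return x, y


def ffmpeg_crop_command(src, dst, box, size=640):
    """Argument list for ffmpeg that crops one bird out of the video."""
    x, y = crop_origin(box, size=size)
    return ["ffmpeg", "-i", src,
            "-filter:v", f"crop={size}:{size}:{x}:{y}", dst]


# Hypothetical file names and bounding box.
command = ffmpeg_crop_command("ledge.mp4", "bird_01.mp4", (900, 400, 1000, 600))
print(" ".join(command))
```

The resulting list can be passed to `subprocess.run` if ffmpeg is installed. Keeping the crop origin around is useful later, because it is needed to map predictions back to the original frame.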

Now we got some action – Pose detection

For years now there has been research on and models for detecting human poses from images and videos. Based on these concepts Jake Graving and Daniel Chae have developed DeepPoseKit (https://github.com/jgraving/DeepPoseKit) for detecting the poses of animals. They have also focused on making the pose detection much faster than in previous libraries. DeepPoseKit is written in Python and uses TensorFlow and Keras in the background. You can read the paper about DeepPoseKit here: https://elifesciences.org/articles/47994

The process for utilising DeepPoseKit has 4 main steps:

- Create the annotation set. This will define the resolution and color of the images used as the basis for the model. The skeleton (the joints and their connections to each other) also needs to be defined in csv as a parent-child hierarchy. For the resolution it is probably easiest if the annotation set resolution closely matches what you expect to get from the videos. That way you don’t need to adjust the frames during the prediction phase. For the color scale you should at least consider whether the model works more reliably in gray scale or in RGB color space.

- Annotate the images in the annotation set. This is the brutal work and requires you to go through the images one by one and mark all skeleton keypoints. The GUI DeepPoseKit provides is pretty simple to use.

- Train the model. This definitely takes some time even with a GPU. There is also support for augmented data, so you can really improve the model during the training.

- Create predictions based on the model.

You can later increase the size of the annotated set by adding more images to it. The training can also be continued from an existing model, so the library is pretty flexible.

Because the development of DeepPoseKit is still in its early phases, there are at least 2 considerable constraints to remember:

- The library can only detect individual poses, so if you have multiple animals in the same frame, you need some additional steps to separate the animals

- DeepPoseKit only supports image resolutions that can be repeatedly divided by 2 (e.g. 320×320, 640×640)
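The resolution constraint is easy to check with a small helper. The sketch below simply counts how many times a side length can be halved without a remainder. How many halvings a given DeepPoseKit model actually needs depends on its configuration, so the function `halvings` is only an illustration and not part of the library.

```python
def halvings(n):
    """Count how many times n can be divided by 2 with no remainder."""
    count = 0
    while n > 0 and n % 2 == 0:
        n //= 2
        count += 1
    return count


for side in (320, 640, 1080, 1920):
    print(side, halvings(side))
# 320 6
# 640 7
# 1080 3
# 1920 7
```

This shows why a 640×640 clip is a convenient choice: the height of a Full HD frame (1080) can only be halved three times, while 640 can be halved seven times.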

Because of these limitations and considering our source material, we came up with the following process:

We decided to create separate clips for each identified bird, run pose detection on these clips and in the end combine the individual pose detection predictions with the original video.

To get started we needed the annotation set. We decided to use the provided sample script (https://github.com/jgraving/DeepPoseKit/blob/master/examples/step1_create_annotation_set.ipynb) that takes in a video and picks random frames from the video. We started originally with 100 images and increased it to 400 during the hackathon.

For the skeleton we ambitiously decided to model 16 keypoints. This turned out to be quite a task, but we managed to do it. In the end we also created a simplified version of the skeleton and the annotations, including only 3 keypoints (beak, head and tail). The original skeleton included eyes and different parts of the wings and legs.

This is what the annotations for the complex skeleton look like:

The simplified skeleton model has only 3 keypoints:

With these 2 annotation sets we were able to create 2 models (simple with 3 keypoints and complex with 16 keypoints).
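To illustrate the parent-child hierarchy, here is a minimal sketch that writes a skeleton file for the simple 3-keypoint model. The column names `name`, `parent` and `swap` follow our understanding of the example skeleton files shipped with DeepPoseKit and should be treated as an assumption, as should the choice of the head as the root keypoint.

```python
import csv
import io

# Assumed hierarchy: head is the root, beak and tail hang off the head.
# The swap column is left empty because none of these keypoints has a
# left/right counterpart.
SIMPLE_SKELETON = [
    {"name": "head", "parent": "", "swap": ""},
    {"name": "beak", "parent": "head", "swap": ""},
    {"name": "tail", "parent": "head", "swap": ""},
]


def skeleton_csv(rows):
    """Serialise the skeleton rows to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "parent", "swap"],
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = skeleton_csv(SIMPLE_SKELETON)
print(csv_text)
```

The complex skeleton would follow the same pattern with 16 rows, where for example the left and right eye could refer to each other in the swap column.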

To train the model we pretty much followed the sample script provided by the developers of DeepPoseKit (https://github.com/jgraving/DeepPoseKit/blob/master/examples/step3_train_model.ipynb). Due to the limited time available we did not work much with the augmented data, which could have improved the accuracy of the models. Running an epoch with 45 steps took about 5-6 minutes on an AWS p3.2xlarge instance (1 GPU) for the complex model. We managed to run around 45 epochs in total, giving a final validation loss of around 25. Because development is never such a straightforward process, we had to start the training of the model from scratch a few times during the hackathon.

The results

When the model was about ready, we ran the detections for a few different videos we had available. Once again we followed the example in the DeepPoseKit library (https://github.com/jgraving/DeepPoseKit/blob/master/examples/step4b_predict_new_data.ipynb). Basically we ran through the individual clips and created the skeleton prediction frame by frame. After we had this data together, we transformed the prediction coordinates from the clip resolution (640×640) to match the original video resolution (1920×1080). In addition to the original script we fine-tuned the graphs a bit and included, for example, an order number for each skeleton. In the end we had a csv file containing, for each identified object in each frame of the video, the coordinates and confidence percentage of each identified skeleton keypoint. We also added the angle between keypoints in radians and degrees, and the distance between connected keypoints. The angle could later be used to analyse, for example, in which direction the bird is moving its head. In practice this generated 160 rows of data per second for one identified bird. Below is a sample of the generated dataset.
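The derived values can be sketched with plain Python. The example assumes that each clip was cropped from the original frame without scaling, so that mapping a keypoint back is just an offset by the crop origin, and it reads the direction between two connected keypoints as an angle in radians and degrees. The function names and sample coordinates are hypothetical.

```python
import math


def to_frame_coords(point, crop_origin):
    """Shift a keypoint from 640x640 clip coordinates to frame coordinates.

    Assumes the clip was cropped without scaling, with its top-left
    corner at crop_origin in the original 1920x1080 frame.
    """
    return point[0] + crop_origin[0], point[1] + crop_origin[1]


def distance_and_angle(parent, child):
    """Distance in pixels and direction from parent to child keypoint.

    Returns (distance, radians, degrees). Image coordinates have the
    y axis pointing down, so positive angles turn clockwise on screen.
    """
    dx = child[0] - parent[0]
    dy = child[1] - parent[1]
    radians = math.atan2(dy, dx)
    return math.hypot(dx, dy), radians, math.degrees(radians)


# Hypothetical head and beak predictions inside one clip.
origin = (630, 180)
head = to_frame_coords((300, 320), origin)
beak = to_frame_coords((303, 324), origin)
distance, radians, degrees = distance_and_angle(head, beak)
print(head, beak, distance, round(degrees, 2))
```

Tracking how the head-to-beak angle changes from frame to frame gives a simple signal of where the bird is turning its head.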

The results looked more promising when the setup in the camera area was simpler. Below is an example of 2 birds’ poses visualised with the complex model, and the results seem quite ok. The predictions follow the movement of a bird pretty well.

The challenges are more obvious when we add more birds to the frame:

The problem is clear if you look at one of the identified birds and its generated 640×640 clip. Because the birds are so close to each other, one frame contains multiple birds and the model starts to mix parts of the birds together.

The video above also shows that the bird in the upper right corner is not correctly modeled when it spreads its wings. This is an indication that the annotation set does not include a wide enough variety of poses and thus the model doesn’t learn those poses.

If we instead use the simpler model on the busy video, it behaves a bit better. Still, it is far from optimal.

So at this point we were puzzling over how to improve the model precision and started to look for additional methods.

Shape detection to the rescue?

One of the options that came to our mind was to try leaving only the identified bird visible in the 640×640 frames. The core of the idea was that when only an individual bird is visible in the frame, the pose detection would not get mixed up with other birds. Another team had partly used this method to rule out all the birds in the distance (upper part of the image). Due to the shape of the birds, nothing standard such as a vignette filter would work out of the box.

So we headed out to look for better alternatives and found Mask RCNN (https://github.com/matterport/Mask_RCNN). Its approach is somewhat similar to the pose detection in that you first have to annotate a lot of pictures and then train the model. Due to the limited time available we had to try using Mask RCNN with just 20 annotated images.

After very quick training it seemed that the model had a really low validation loss. But unfortunately the results were not that good. As you can see from the video below, only parts of the birds were identified by the shape detection (shapes marked in blue).

So we think this is a relevant idea, but unfortunately we didn’t have time to verify it.

Another idea we had was to detect some kind of pattern that would help identify the birds that are a couple. We played around with the idea that by estimating the density map of each individual bird we could identify the couples as areas of high density. If the birds are tracked, then the birds that have a high density output would be classified as a couple. This would open up a lot of possibilities for the scientists to track the couples and do research on their patterns. For this task the first thing we have to do is to put a single marker on each individual bird. So instead of tagging the bird as a whole, we tag the head of the bird, which is a single point. Imagine the background as black and the top of the head of each individual bird marked with a color. The deep learning architectures used for this were UNET and FCRN (Fully Convolutional Regression Networks). These are common architectures for estimating density maps. We got the idea from this blog post (https://towardsdatascience.com/objects-counting-by-estimating-a-density-map-with-convolutional-neural-networks-c01086f3b3ec), which describes how the estimated density maps are used to count the number of objects. We ended up using this to identify both the couples and the number of birds. Sadly the time was not enough to see reasonable results. But the idea was very much appreciated by the judges and could be something that they could think about and move forward with.
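To make the density map idea more concrete, the sketch below builds the kind of target map such a network would be trained to predict: one normalised Gaussian blob per head marker, so that the sum over the whole map equals the number of birds. It is written in plain Python for readability, the head positions are made up, and it does not include the UNET or FCRN model itself.

```python
import math


def density_map(points, width, height, sigma=2.0):
    """Sum of one normalised Gaussian per (x, y) head marker.

    Each blob is normalised to sum to 1 over the grid, so the total of
    the map equals the number of markers.
    """
    grid = [[0.0] * width for _ in range(height)]
    for px, py in points:
        kernel = [
            [math.exp(-((x - px) ** 2 + (y - py) ** 2) / (2 * sigma ** 2))
             for x in range(width)]
            for y in range(height)
        ]
        total = sum(map(sum, kernel))
        for y in range(height):
            for x in range(width):
                grid[y][x] += kernel[y][x] / total
    return grid


# Hypothetical head markers: two birds close together and one alone.
heads = [(10, 12), (13, 12), (40, 30)]
dmap = density_map(heads, width=64, height=48)

count = sum(map(sum, dmap))
print(round(count, 6))             # total density equals the bird count
print(dmap[12][11] > dmap[30][40])  # density is higher between the pair
```

Two birds sitting next to each other produce overlapping blobs and therefore a higher local density than a bird sitting alone, which is the signal we hoped to use for spotting couples.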

Another idea would be to use some kind of tagging of the birds. That would work as long as the birds remain on the ledge, but in general it might be a bit challenging as the videos are very long and the birds move around the ledge to some extent.

What next?

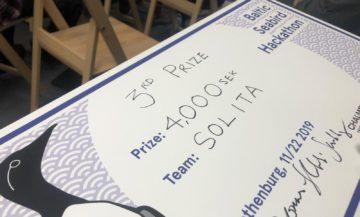

Well, with the provided results we won the 3rd prize in the hackathon. According to the jury, the biggest achievements were related to the pose detection and the possibilities it opens up for science. It seems that there is not much research done on the social interactions of seagulls, and our pose detection model could help with that.

It was clear to all of us that we wanted to donate the money, and we have now decided to give it as a scholarship to a student who will take the models and work on them further for the benefit of seabird science. It will be interesting to see what the models can tell us about the life of the Baltic seabirds, their social interaction and in general the socioeconomics of the Baltic Sea.

On behalf of our Team Solita (Mari Harju, Jani Turunen, Kimmo Kantojärvi, Zeeshan Dar and Layla Husain),

Kimmo & Zeeshan