Positioning data build tool, dbt, in the data tooling landscape

There’s a lot of buzz going around the data build tool, or dbt. It has gained a lot of traction. We’ve seen some projects and offers, where dbt is already chosen as the tool to be used. But what dbt actually is? Where can you position it in the data tooling landscape? What does it actually do, what it sort-of does, and what it doesn’t do? This blog post focuses on how to position dbt, what are its strengths and weaknesses, and how you should compare it to other tools in the market.

What is data build tool?

Data build tool, dbt for short, is a tool to manage and, to an extent, orchestrate the transformations inside a database. It’s an open source command line tool written in Python. Every entity is defined as a “SELECT”-statement in SQL with the possibility to use Jinja templating. Configurations are written in yml files. It’s a “unixey” command line tool designed to do one task, “Transformations” of “ETL”, do them well, and provide easy integrations to other parts of your data toolbox.

dbt, fully supports modern cloud data warehouses: Snowflake, Redshift and BigQuery. Azure Synapse (and Azure SQL Server for that matter) is supported by community created plugins. The full list of supported databases is listed at: https://docs.getdbt.com/docs/supported-databases/

dbt comes in two flavors: “dbt core” which is the open source cli tool, and a paid “dbt cloud”. We will focus on the free open source cli version. dbt cloud offers for example ci/cd pipelines and a browser-based IDE, but runs using the same dbt core. We’ll create our own pipelines, environments and a production ready setup in a later post.

https://www.getdbt.com/product/

What dbt does well

Data build tool really focuses on doing one thing and doing it well straight from the command line. In that sense, it can be seen sort of an “Unixey” tool to do transformations inside a data warehouse. But there’s much more of what dbt does well than just being a nice cli tool.

The already mentioned data transformations are at the heart of dbt. Data is transformed step by step by simply writing the required SQL statements as “SELECT nnn, from <a reference syntax to another dbt entity>”. There is no DDL needed as dbt takes care of that based on configuration. Different SQL statements are stored in a project structure, one file per one database entity. The project and file structure is pretty much freeform, and there obviously are some design considerations to make here. More on the project structure in a later post about creating a production worthy dbt environment.

As mentioned, dbt is a command line interface tool. Its setup on your own machine is really as simple as brew install dbt. Of course, you might want to run it in a virtual environment, but that’s not the point. The point is that getting dbt up and running is really simple and fast:

- Install dbt

- Setup project structure

- Setup your database connection in yml profile

- Write familiar SQL in files

- dbt run

- git commit

In addition to the entities.sql files in the project, there are .yml configurations. These provide a great way to have wide yet granular configurations on all aspects of your project. It is easy to tell staging entities to be tables in “staging” schema, transformations to be views in “transform” schema, and publish layer to be incremental loads in “publish” layer. And then, as the project progresses, you can just as easily make exceptions to these.

To make things better, the SQL used to write the transformations is spiced with Jinja-templating language. You use special syntax to reference your source tables, you can do for-loops, and what makes it especially useful, you can create macros. Macros and different packages really extend what the basic dbt can do out-of-the-box. More about packages in dbt (data build tool) – Explore Packages (getdbt.com). As an example, there’s a really nice small macro to do a “select * expect a and b columns”, which then evaluates to include all the named columns without a and b. On the other end of the spectrum, there’s a package called dbtvault, which contains plenty of macros to aid in creating a Data Vault in Snowflake using dbt.

Besides transformation, dbt does data testing. As with the transformations, you can write your own SQL or use ready-made statements like “unique” or “not_null” to test for those properties in any table/column.

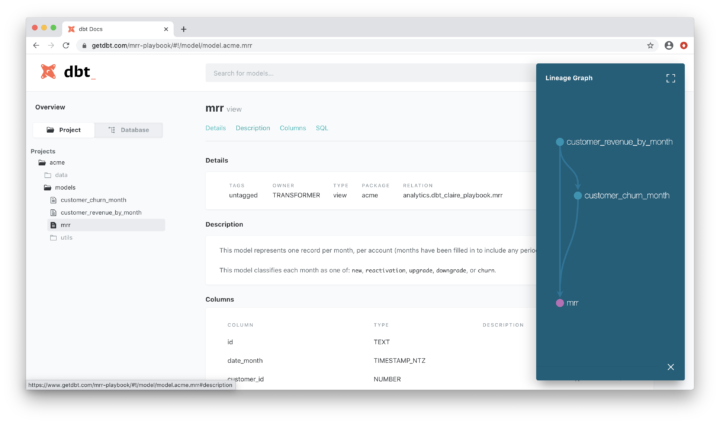

Last thing dbt does really well is the documentation. There’s a built-in documentation generation, which documents the current project and SQLs as a static webpage, which can then be viewed either locally or put up on a simple Blob Storage or S3 (since it’s a static webpage).

Documentation | dbt Docs (getdbt.com)

To put it short, dbt really shines in doing the transformations. The transformation capabilities are greatly enhanced with Jinja templating, macros and hooks (different times to fire a macro or SQL, like “before a table” or “at the end of a run”). To top it off, built-in testing capabilities and the documentation generation make it really easy to start building a data warehouse.

What dbt sort-of does

In addition to the transformations, there are some other capabilities data build tool can do, but you might find them quite lacking quite quickly: Orchestration and SQL generation.

The orchestration here is a bit of a mixed bag. dbt can do “runs” against a target database, running all the transformations you tell it to run using a pretty simple syntax or the whole project. But any more fine grained workflow orchestration requires a lot more work and moving parts than just dbt. Say you want to run one source system data on an hourly schedule, the rest daily, and do the runs going “run stage -> test stage -> run transform -> test transform -> run publish -> test publish -> switch to new publish”. Pretty basic need for a larger setup, but pretty difficult, if not impossible, to achieve with (the current version) of dbt. That is not to say you can’t use dbt to orchestrate your runs, you can and probably should start with it. But you should be aware of the limitations when the business requirements come.

The other lacking part is the SQL generation. This has partly to do with the SQL, Jinja, macros and packages. They do expand on the capabilities, but out-of-the-box, dbt doesn’t contain any SQL generation capabilities besides DDL. Say you want to have a common structure and metadata columns for all staging and publish tables: you either need to find a package for it, or create your own macro. Of course this is a double edged sword: dbt is really expandable in this way, but limited out-of-the-box.

What dbt doesn’t do

Data build tool is not an integration tool. Its only focus is managing things inside a data warehouse. What this means is that you should manage your data loads from storage to the database somewhere else, and you should also account for your persistent staging somehow.

dbt is built on the assumption that you can always completely rebuild your data warehouse from the source data. This comes up here and there when you get to the details of running a dbt project, but perhaps the biggest thing to note here, together with the lack of integrations, is how to account for persistent staging.

This means that not only you need to have a persistent staging available for dbt to rebuild the database, you also need to manage it outside dbt since you really can’t run integrations with it. This comes down to managing landing tables and populating those tables with some other tool, and possibly integrating that other tool with dbt for seamless scheduling.

As stated earlier, dbt is a cli tool using file based project structure to manage your data warehouse. What this means is that dbt in itself doesn’t contain any way to manage deployments to different environments or anything. For this, you need to build your version control and ci/cd pipelines. This, again, is something for the next part of this blog.

Things to consider

Then there are things you need to consider in your project and in the environment in which you develop. Not the dev/qa/prod environments, but the size, number and capabilities of developers and so on. As a “Unixey” tool with pretty much no guidance, there are some aspects which come in to play in different sized projects and different environments.

For larger projects and large and/or multiple teams, using dbt can have it’s own problems. For larger projects, you will need to design your project structure in a way that it supports your development. Having a huge all-in-one dbt project has its own pros and cons: consistent way of using dbt, common packages and macros, managing a huge repository, deploying consistently working versions and dbt performance (as of writing this blog post). Splitting a data warehouse project into multiple dbt projects again has its own problems: not having all of the entities available for simple ref-syntax, managing packages and macros among projects, deploying consistently working versions to name a few.

Another thing to consider is the fact that dbt works straight on top of the target database. There are no abstraction layers between. At first, this might seem like not a big deal, but what this means is that you need to have some understanding of the underlying database when using dbt: even if you are writing the SQL with Jinja, what you type is what you get. There are no abstractions, no SQL generators based on the target database engine. Out-of-the-box. Again, you can create your own macros and use packages. Your SQL files are the entities, there are no more abstract models and the relations between them: it’s “select nn from aa”.

So when to use dbt?

As presented above, dbt shines in doing (mostly) one thing and one thing only: managing the SQL transformations on your data. It’s fast to set up, excels at creating transformations and a data lineage based load ordering is easy. You can write data quality tests. And you even have a way to execute those runs and tests.

Equally important are the parts that dbt doesn’t do anything about: it doesn’t do integrations. It doesn’t do load orchestration.

And you should also know the pitfalls: you should plan and divide your dbt project in advance. Manage testing and releasing new builds. How to keep scaling and making sure that all isn’t behind “the-one-dev-that-set-it-all-up and knows everything about dbt”?

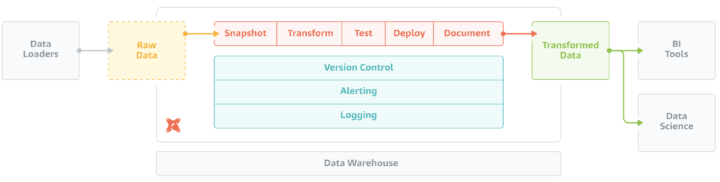

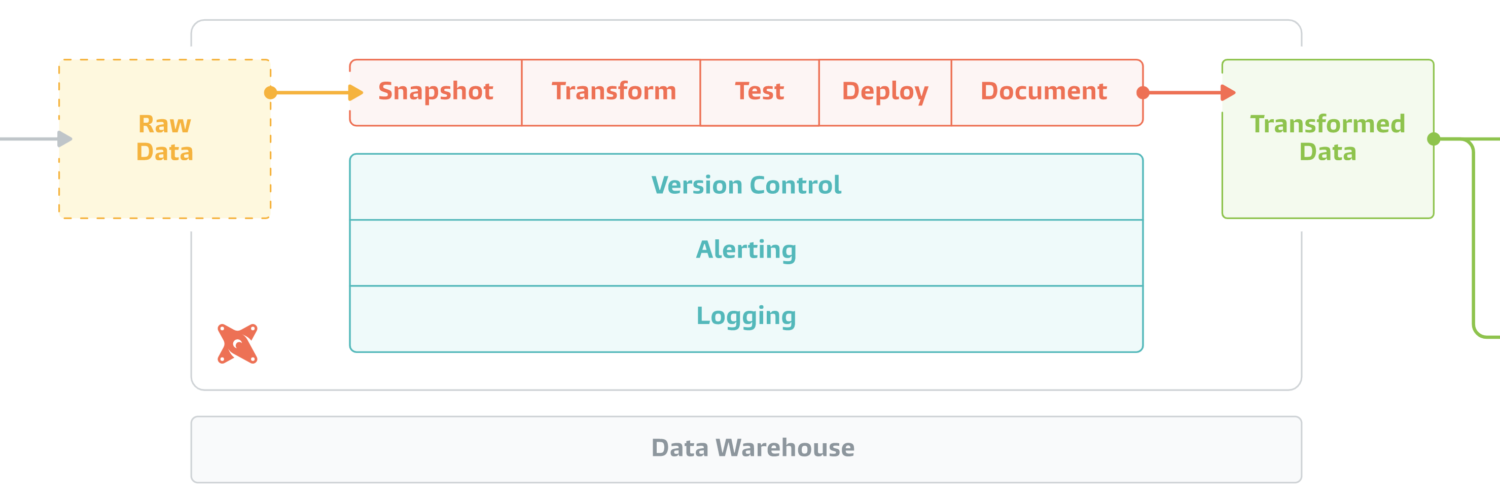

So in conclusion, dbt really is a “Unixey” tool. It does transformations and their management from the command line and provides integration points for tools before and tools after it in the load chain. To have a working DataOps toolbox in addition to just the cli dbt you would require at least:

- Version control and the processes surrounding it

- Managing different versions in different target environments

- Source data integrations and data loads

- Creating and managing data load workflows

But this is not all bad. As with all projects and business cases, you want to slice them up into smaller and more manageable parts: in a sense, dbt does just that with focusing on being only one tool in the toolbox.

This also means that you can’t easily compare just dbt to tools like Fivetran, Matillion, WhereScape, Airflow, Azure Data Factory or Solita Agile Data Engine. You need to know what are the capabilities that are missing from your data project toolbox, what capabilities different tools bring to the table, and what the benefits and drawbacks of each are. And if you don’t know what capabilities you should have, go check out Vesa’s nice post about DataOps platforms: What to look for in a DataOps platform? – Solita Data